This article describes how I back up the data of projects managed in Nulab Backlog.

Nulab Backlog is simple and easy to use, but its issue hierarchy is limited to two levels, so as a project grows you may want to migrate to another project management tool. Even if you don't plan to import the Backlog data into the new tool, it is reassuring to keep a full copy of the project in case you later need to refer to past descriptions. However, simply searching for issues and saving the results does not save their attachments, so I use Python to back up the attachments as well.

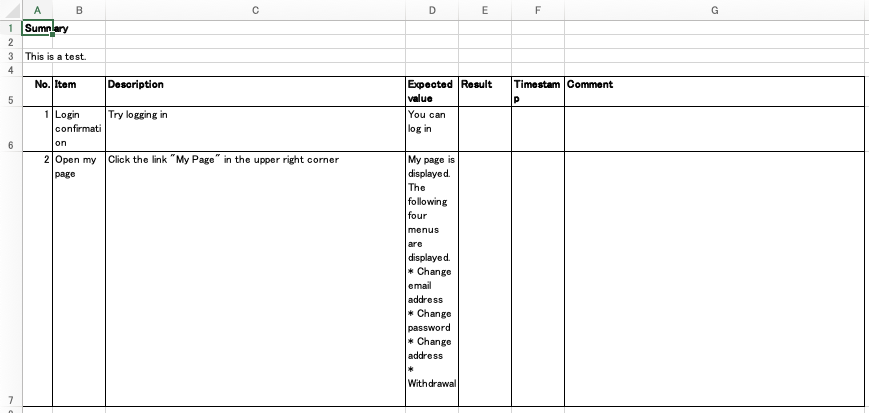

Environment

- Python

Code

First, save the project information and API Key in backlog_credential.py.

```python
api_key = 'xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx'
project_key = 'PROJECT'
url_base = 'https://xxxxxxxxx.backlog.com'
```

Then run the following program. It saves each issue, its comments, and its attachment list as JSON files, and downloads the attached files into the same directory.

```python
from pathlib import Path
from urllib.parse import urlencode
import json
import os
import time

import requests

from backlog_credential import api_key, project_key, url_base

SLEEP_TIME = 1   # seconds to wait after each request
PAGE_SIZE = 100  # number of issues requested per call
DEBUG = False


def build_get_url(path, params=None):
    # every request carries the API key as a query parameter
    query = {'apiKey': api_key}
    query.update(params or {})
    return url_base + path + '?' + urlencode(query)


def get_all_projects():
    url = build_get_url('/api/v2/projects')
    response = requests.get(url)
    response.raise_for_status()
    return response.json()


def get_project_id(project_key):
    for project in get_all_projects():
        if project['projectKey'] == project_key:
            return project['id']
    raise Exception('Project not found')


def get_issue_list(project_id, offset=0):
    params = {
        'projectId[]': project_id,
        'offset': offset,
        'count': PAGE_SIZE,
        'sort': 'created',
    }
    url = build_get_url('/api/v2/issues', params)
    result = requests.get(url)
    # raise exception if not success
    result.raise_for_status()
    time.sleep(SLEEP_TIME)
    if DEBUG:
        print("headers:", result.headers)
    return result.json()


def get_issue(issue_id):
    url = build_get_url('/api/v2/issues/' + str(issue_id))
    result = requests.get(url)
    result.raise_for_status()
    time.sleep(SLEEP_TIME)
    return result.json()


def get_all_issues(project_id):
    # request pages until one comes back shorter than PAGE_SIZE
    offset = 0
    issues = []
    while True:
        result = get_issue_list(project_id, offset)
        issues += result
        if len(result) < PAGE_SIZE:
            break
        offset += PAGE_SIZE
    return issues


def get_issue_comments(issue_key):
    # note: a single request, so only the first 100 comments are fetched
    params = {
        'count': 100,
        'order': 'asc',
    }
    url = build_get_url(
        '/api/v2/issues/' + issue_key + '/comments', params)
    result = requests.get(url)
    # raise exception if not success
    result.raise_for_status()
    time.sleep(SLEEP_TIME)
    return result.json()


def get_attached_file_list(issue_key):
    url = build_get_url(
        '/api/v2/issues/' + issue_key + '/attachments')
    result = requests.get(url)
    result.raise_for_status()
    time.sleep(SLEEP_TIME)
    return result.json()


def get_and_save_attached_file(issue_key, attachment_id, filepath):
    url = build_get_url(
        '/api/v2/issues/' + issue_key + '/attachments/' + str(attachment_id))
    result = requests.get(url)
    result.raise_for_status()
    time.sleep(SLEEP_TIME)
    with open(filepath, 'wb') as f:
        f.write(result.content)
    return result


def save_json(data, filepath):
    # write real JSON (not the Python repr) so it can be loaded again later
    with open(filepath, 'w', encoding='utf-8') as f:
        json.dump(data, f, ensure_ascii=False, indent=2)


# create issue directory, and save the content as content.json
def backup_issue(issue, backup_dir):
    issue_key = issue['issueKey']
    issue_path = os.path.join(backup_dir, 'issues', str(issue_key))
    os.makedirs(issue_path, exist_ok=True)
    save_json(issue, os.path.join(issue_path, 'content.json'))

    # get all comments and save it as comments.json
    comments = get_issue_comments(issue_key)
    save_json(comments, os.path.join(issue_path, 'comments.json'))

    # get attached file list and save it as attached_files.json
    attached_files = get_attached_file_list(issue_key)
    save_json(attached_files, os.path.join(issue_path, 'attached_files.json'))

    # download attached files
    for file in attached_files:
        get_and_save_attached_file(
            issue_key, file['id'], os.path.join(issue_path, file['name']))


def backup_project(project_key, backup_dir='backup'):
    backup_dir = Path(backup_dir)
    backup_dir.mkdir(parents=True, exist_ok=True)
    project_id = get_project_id(project_key)
    issues = get_all_issues(project_id)
    for issue in issues:
        backup_issue(issue, backup_dir)


if __name__ == '__main__':
    backup_project(project_key)
```

```
example.py
```
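The offset-based paging that `get_all_issues` relies on can be tried out without touching the API. The following is a minimal sketch, not part of the original script: `fetch_all` and `fake_fetch_page` are hypothetical names, and the fake data stands in for the responses of the issue list endpoint.

```python
from typing import Callable, List


def fetch_all(fetch_page: Callable[[int], List[dict]],
              page_size: int = 100) -> List[dict]:
    """Collect every item from a source that is read page by page via an offset."""
    items: List[dict] = []
    offset = 0
    while True:
        page = fetch_page(offset)
        items += page
        # a page shorter than page_size means there is nothing left to fetch
        if len(page) < page_size:
            break
        offset += page_size
    return items


# Hypothetical stand-in for the API: 250 fake issues, at most 100 per call.
FAKE_ISSUES = [{'id': n} for n in range(250)]


def fake_fetch_page(offset: int) -> List[dict]:
    return FAKE_ISSUES[offset:offset + 100]


all_issues = fetch_all(fake_fetch_page)
print(len(all_issues))
```

The loop stops on the first short page, so a project whose issue count is an exact multiple of the page size costs one extra request that returns an empty list.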

This program was written around 2024 and is based on the Backlog API as it was at that time.

The function backup_project allows you to pass the backup destination directory as the second argument.
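As one way to use that second argument, each run could write to its own dated directory. This is only a sketch: `dated_backup_dir` is a hypothetical helper that is not part of the script, and it deliberately returns a single-level directory name so that it does not depend on parent directories already existing.

```python
from datetime import date


def dated_backup_dir(project_key: str, day: date) -> str:
    """Return a single-level directory name such as 'backup_PROJECT_2024-05-01'."""
    return f"backup_{project_key}_{day.isoformat()}"


target = dated_backup_dir('PROJECT', date(2024, 5, 1))
print(target)  # backup_PROJECT_2024-05-01

# With the backup script loaded, the call would then look like:
# backup_project(project_key, target)
```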